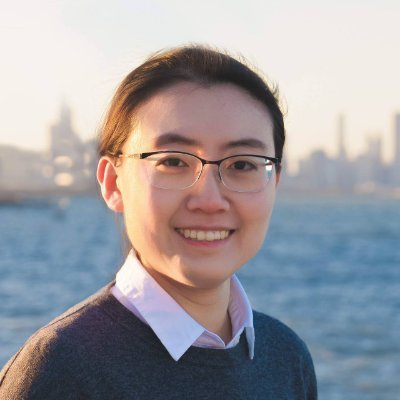

@fredahshi

Assistant Professor @UWCheritonCS & Faculty Member @VectorInst. PhD in CS from @TTIC_Connect, BS from @PKU1898. Ex-@MetaAI, @GoogleDeepMind. Feeder of 3 🐈

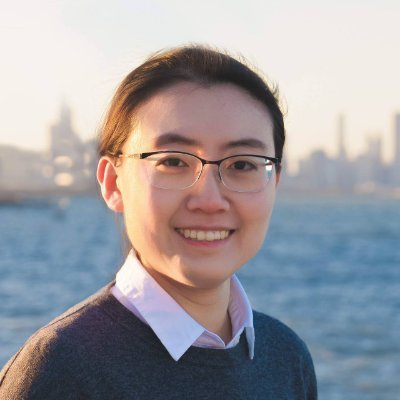

@fredahshi

Assistant Professor @UWCheritonCS & Faculty Member @VectorInst PhD in CS from @TTIC_Connect BS from @PKU1898 , Ex- @MetaAI , @GoogleDeepMind Feeder of 3 🐈