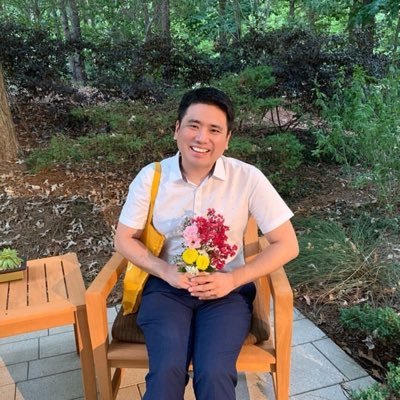

@_jongwook_kim

Member of Technical Staff @OpenAI, authored CLIP and Whisper; previously at @nyuMARL, @SpotifyResearch, @pandoramusic, @kakaocorpglobal, and @NCSOFT

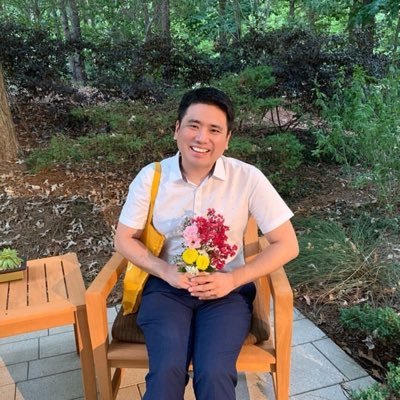

@_jongwook_kim

Member of Technical Staff @OpenAI , authored CLIP and Whisper; previously at @nyuMARL , @SpotifyResearch , @pandoramusic , @kakaocorpglobal , and @NCSOFT