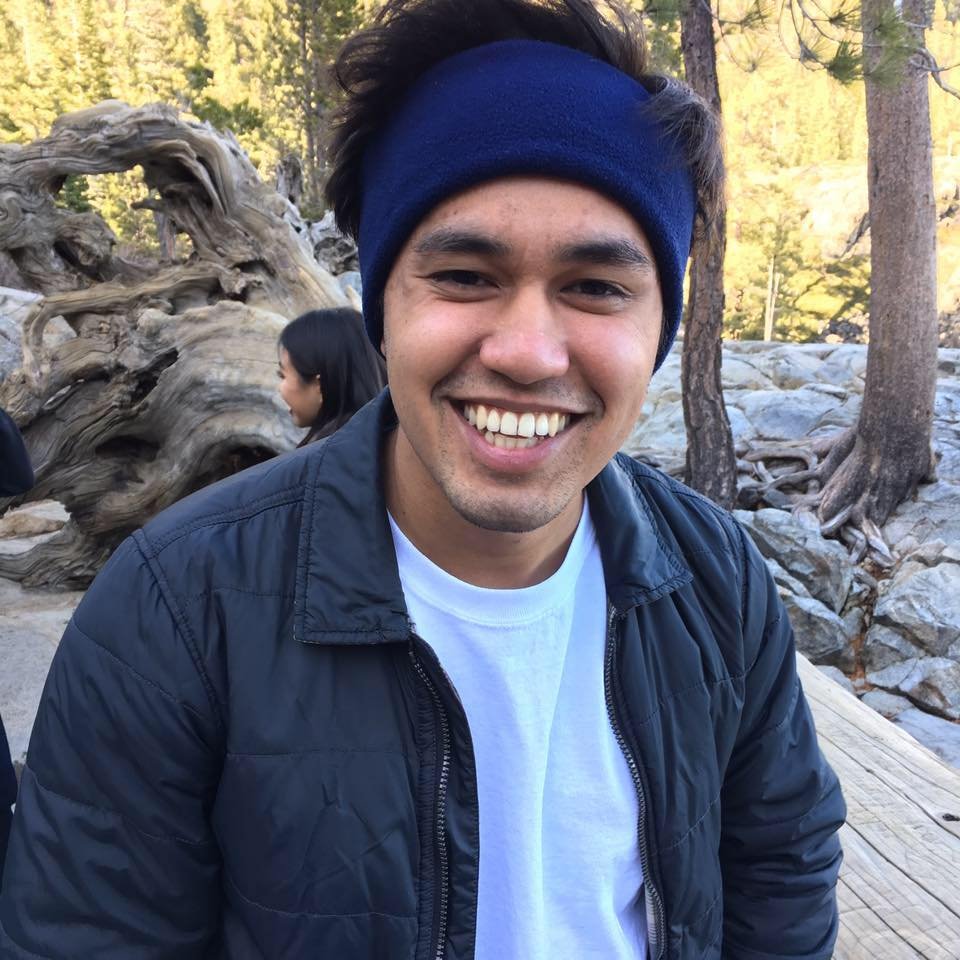

@TheRealRPuri

AI things @ OpenAI - Her, GPT4V, GPT4, GPT3.5, Codex | past: NVIDIA - megatron, sentiment neurons | go bears 🐻
